Q-Learning Agents

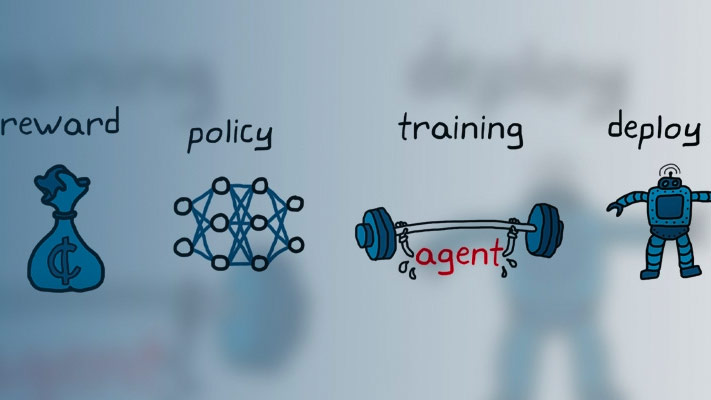

The Q-learning algorithm is a model-free, online, off-policy reinforcement learning method. A Q-learning agent is a value-based reinforcement learning agent that trains a critic to estimate the return or future rewards.

For more information on the different types of reinforcement learning agents, see Reinforcement Learning Agents.

Q-learning agents can be trained in environments with the following observation and action spaces.

| Observation Space | Action Space |
|---|---|
| Continuous or discrete | Discrete |

Q agents use the following critic representation.

| Critic | Actor |
|---|---|
| Q-value function critic Q(S,A), which you create using rlQValueRepresentation | Q agents do not use an actor. |

During training, the agent explores the action space using epsilon-greedy exploration. During each control interval, the agent selects a random action with probability ϵ; otherwise, with probability 1-ϵ, it selects an action greedily with respect to the value function. This greedy action is the action for which the value function is greatest.
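The selection rule described above can be sketched in a few lines of Python. This is only an illustration of epsilon-greedy selection, not the toolbox's implementation; the function name `epsilon_greedy` and the plain list of Q-values are assumptions made for the example.

```python
import random

def epsilon_greedy(q_values, epsilon, rng=random):
    """Return an action index given the Q-values of the current observation.

    q_values: list of estimated values, one per discrete action (hypothetical layout).
    epsilon:  probability of choosing a uniformly random action.
    """
    if rng.random() < epsilon:
        # Explore: pick any action uniformly at random.
        return rng.randrange(len(q_values))
    # Exploit: pick the action with the greatest estimated value
    # (the first such action if several are tied).
    return max(range(len(q_values)), key=lambda a: q_values[a])

# With epsilon = 0 the choice is always the greedy action.
print(epsilon_greedy([0.1, 0.9, 0.3], epsilon=0.0))  # 1
```

With ϵ set to 1 every action is random, and with ϵ set to 0 the agent never explores; practical settings lie in between and are usually decayed during training.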

Critic Function

To estimate the value function, a Q-learning agent maintains a critic Q(S,A), which is a table or function approximator. The critic takes observation S and action A as inputs and returns the corresponding expectation of the long-term reward.

For more information on creating critics for value function approximation, see Create Policy and Value Function Representations.

When training is complete, the trained value function approximator is stored in critic Q(S,A).
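To make the table form of the critic concrete, here is a minimal Python sketch of a lookup-table Q(S,A). The class `TableCritic` and its methods are hypothetical names chosen for illustration and are not part of any toolbox; the sketch also assumes a fixed initial value rather than random initialization, purely to keep the output predictable.

```python
from collections import defaultdict

class TableCritic:
    """Hypothetical tabular critic: one stored value per (observation, action) pair."""

    def __init__(self, actions, initial_value=0.0):
        self.actions = list(actions)
        # Unseen (observation, action) pairs default to initial_value.
        self.table = defaultdict(lambda: initial_value)

    def value(self, obs, action):
        """Estimated long-term reward for taking `action` in `obs`."""
        return self.table[(obs, action)]

    def greedy_action(self, obs):
        """Action with the greatest estimated value for `obs`."""
        return max(self.actions, key=lambda a: self.value(obs, a))

critic = TableCritic(actions=["left", "right"])
critic.table[("s0", "right")] = 1.5
print(critic.value("s0", "right"))  # 1.5
print(critic.greedy_action("s0"))   # right
```

A function approximator plays the same role when the observation space is too large or continuous for a table: it maps an observation and action to a value estimate instead of looking one up.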

Agent Creation

To create a Q-learning agent:

1. Create a critic using an rlQValueRepresentation object.
2. Specify agent options using an rlQAgentOptions object.
3. Create the agent using an rlQAgent object.

Training Algorithm

Q-learning agents use the following training algorithm. To configure the training algorithm, specify options using an rlQAgentOptions object.

- Initialize the critic Q(S,A) with random values.
- For each training episode:
  1. Set the initial observation S.
  2. Repeat the following for each step of the episode until S is a terminal state.
     1. For the current observation S, select a random action A with probability ϵ. Otherwise, select the action for which the critic value function is greatest.

        To specify ϵ and its decay rate, use the EpsilonGreedyExploration option.
     2. Execute action A. Observe the reward R and next observation S'.
     3. If S' is a terminal state, set the value function target y to R. Otherwise, set it to

        $$y = R + \gamma \max_{A} Q(S', A)$$

        To set the discount factor γ, use the DiscountFactor option.
     4. Compute the critic parameter update.
     5. Update the critic using the learning rate α.

        Specify the learning rate when you create the critic representation by setting the LearnRate option in the rlRepresentationOptions object.
     6. Set the observation S to S'.
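The training steps above can be sketched end to end in Python for a table critic. This is a minimal illustration under stated assumptions, not the toolbox's implementation: the chain environment, the function name `train_q_learning`, the per-episode epsilon decay schedule, and the update form Q(S,A) ← Q(S,A) + α(y − Q(S,A)) (the standard tabular Q-learning update) are all choices made for the example.

```python
import random

def train_q_learning(n_states=5, episodes=500, alpha=0.1, gamma=0.9,
                     epsilon=1.0, epsilon_decay=0.01, epsilon_min=0.05, seed=0):
    """Tabular Q-learning on a hypothetical chain: start at state 0, goal at the last state."""
    rng = random.Random(seed)
    actions = [0, 1]              # 0 = move left, 1 = move right
    terminal = n_states - 1

    # Initialize the critic table with small random values.
    q = [[rng.uniform(-0.01, 0.01) for _ in actions] for _ in range(n_states)]

    def step(s, a):
        """Hypothetical environment: reward 1 on reaching the terminal state, else 0."""
        s_next = max(0, s - 1) if a == 0 else s + 1
        reward = 1.0 if s_next == terminal else 0.0
        return reward, s_next

    for _ in range(episodes):
        s = 0                                     # set the initial observation
        while s != terminal:
            # Epsilon-greedy action selection.
            if rng.random() < epsilon:
                a = rng.choice(actions)
            else:
                a = max(actions, key=lambda x: q[s][x])
            r, s_next = step(s, a)                # execute A, observe R and S'
            # Value function target: R if S' is terminal, else R + gamma * max_A Q(S', A).
            y = r if s_next == terminal else r + gamma * max(q[s_next])
            delta = y - q[s][a]                   # critic update (temporal-difference error)
            q[s][a] += alpha * delta              # apply the update with learning rate alpha
            s = s_next                            # set S to S'
        # Assumed schedule: decay epsilon once per episode, never below epsilon_min.
        epsilon = max(epsilon_min, epsilon * (1 - epsilon_decay))
    return q

q = train_q_learning()
# Greedy action per non-terminal state after training.
print([max((0, 1), key=lambda a: q[s][a]) for s in range(4)])
```

In this toy problem the learned values for moving right should approach 1, γ, γ², and so on as the distance to the goal grows, which is a quick sanity check that the target and update steps are wired correctly.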