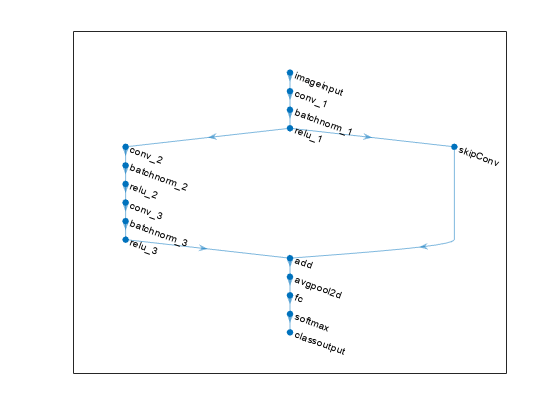

layerGraph

Graph of network layers for deep learning

Description

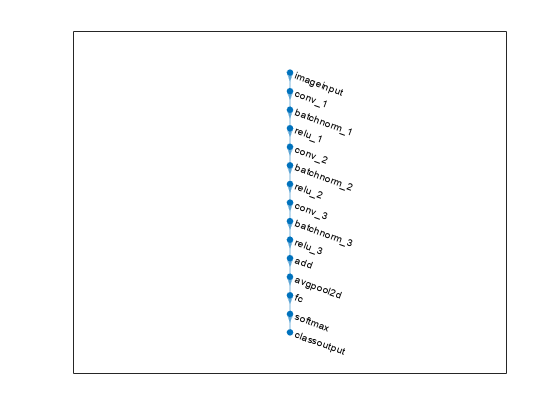

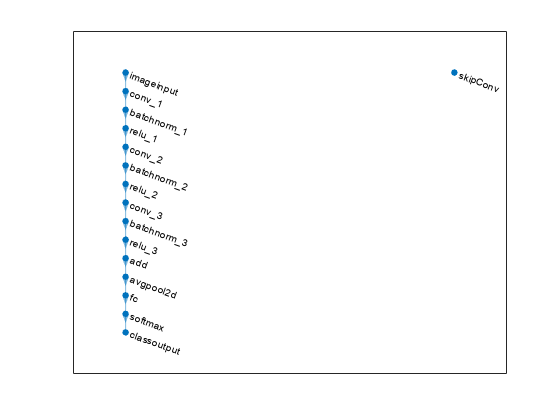

A layer graph specifies the architecture of a deep learning network with a more complex graph structure, in which layers can have inputs from multiple layers and outputs to multiple layers. Networks with this structure are called directed acyclic graph (DAG) networks. After you create a layerGraph object, you can use the object functions to plot the graph and modify it by adding, removing, connecting, and disconnecting layers. To train the network, use the layer graph as input to the trainNetwork function, or convert it to a dlnetwork and train it using a custom training loop.
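As an illustration of a layer that takes inputs from multiple layers, the following minimal sketch builds a small graph with one skip connection and plots it. The layer names, image size, and filter counts are assumptions chosen for the example and are not taken from this page.

```matlab
% Main branch, given as a sequential layer array (sizes are illustrative).
layers = [
    imageInputLayer([28 28 1],'Name','input')
    convolution2dLayer(3,16,'Padding','same','Name','conv_1')
    reluLayer('Name','relu_1')
    convolution2dLayer(3,16,'Padding','same','Name','conv_2')
    additionLayer(2,'Name','add')
    fullyConnectedLayer(10,'Name','fc')
    softmaxLayer('Name','softmax')
    classificationLayer('Name','classoutput')];

% The array is connected sequentially, so conv_2 feeds the first input of add.
lgraph = layerGraph(layers);

% Skip connection: relu_1 now feeds both conv_2 and the second input of add.
lgraph = connectLayers(lgraph,'relu_1','add/in2');

% Visualize the resulting DAG.
figure
plot(lgraph)
```

Here relu_1 has two outgoing connections and add has two incoming ones, which is the kind of structure a purely sequential layer array cannot express.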

Creation

Description

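lgraph = layerGraph creates an empty layer graph that contains no layers. You can add layers to the empty graph by using the addLayers function.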

lgraph = layerGraph(layers) creates a layer graph from an array of network layers and sets the Layers property. The layers in lgraph are connected in the same sequential order as in layers.
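The two creation syntaxes can be sketched as follows. The layer array is a hypothetical example; any valid array of named layers should behave the same way.

```matlab
% A small sequential layer array (names and sizes are illustrative).
layers = [
    imageInputLayer([28 28 1],'Name','input')
    convolution2dLayer(3,16,'Padding','same','Name','conv_1')
    reluLayer('Name','relu_1')
    fullyConnectedLayer(10,'Name','fc')
    softmaxLayer('Name','softmax')
    classificationLayer('Name','classoutput')];

% Create the graph directly from the array; layers are connected in order.
lgraphFromLayers = layerGraph(layers);

% Alternatively, start from an empty graph and add the layers afterward.
lgraphEmpty = layerGraph;
lgraphEmpty = addLayers(lgraphEmpty,layers);

% Inspect the layers and the table of connections.
disp(lgraphFromLayers.Layers)
disp(lgraphFromLayers.Connections)
```

Starting from an empty graph is mainly useful when you want to add several branches one at a time and connect them yourself.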

Input Arguments

Properties

Object Functions

| Function | Description |
| --- | --- |
| addLayers | Add layers to layer graph |
| removeLayers | Remove layers from layer graph |
| replaceLayer | Replace layer in layer graph |
| connectLayers | Connect layers in layer graph |
| disconnectLayers | Disconnect layers in layer graph |
| plot | Plot neural network layer graph |
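The sketch below exercises several of these functions on a small graph and then converts the result to a dlnetwork. The layer names and sizes are assumptions made for the example. The input layer uses 'Normalization','none' and the classification output layer is removed before conversion, on the assumption that dlnetwork does not accept output layers.

```matlab
% Illustrative starting graph (names and sizes are assumptions).
layers = [
    imageInputLayer([28 28 1],'Name','input','Normalization','none')
    convolution2dLayer(3,8,'Padding','same','Name','conv')
    reluLayer('Name','relu')
    fullyConnectedLayer(10,'Name','fc')
    softmaxLayer('Name','softmax')
    classificationLayer('Name','classoutput')];
lgraph = layerGraph(layers);

% replaceLayer: swap the ReLU for a leaky ReLU; the connections are kept.
lgraph = replaceLayer(lgraph,'relu',leakyReluLayer(0.1,'Name','leaky'));

% disconnectLayers and connectLayers: break a connection, then restore it.
lgraph = disconnectLayers(lgraph,'conv','leaky');
lgraph = connectLayers(lgraph,'conv','leaky');

% removeLayers: drop the output layer together with its connections.
lgraph = removeLayers(lgraph,'classoutput');

% Convert the remaining graph for use in a custom training loop.
net = dlnetwork(lgraph);
```

Each function returns a new layer graph rather than modifying its input in place, so the result must be assigned back to a variable.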